Quantum Computing

There's a new paradigm in computing, but it won’t improve your smartphone anytime soon.

Computers are firmly entrenched in modern life as indispensable extensions of ourselves. They’re our accountants, our assistants, our destinations for entertainment—they even keep track of our memories.

Within a few years, researchers hope to create an entirely new kind of computer—a practical quantum computer—capable of tackling problems for which your new laptop is about as useful as a cheese grater.

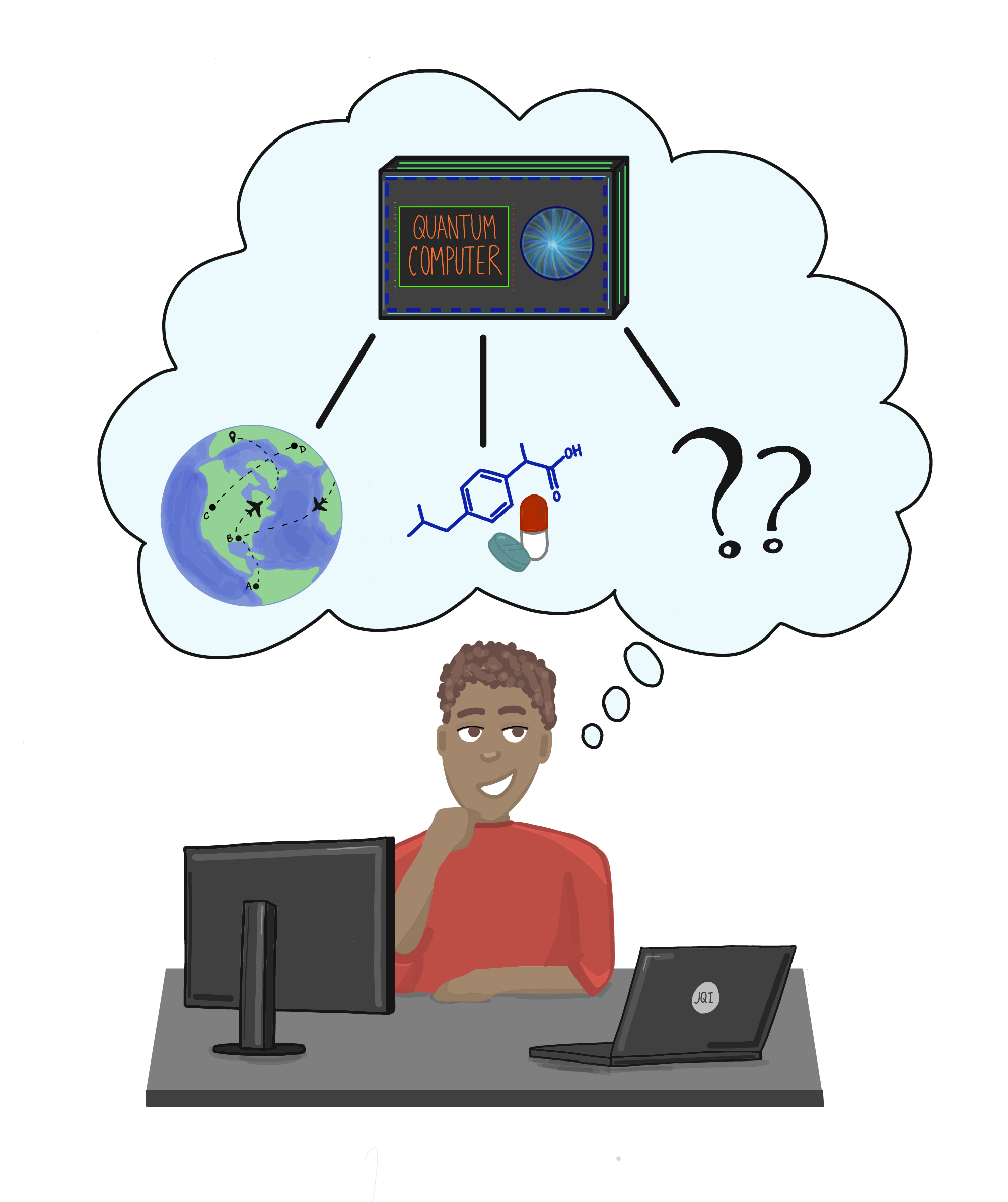

What kinds of problems are we talking about? Scientists have come up with a handful of killer apps, but for the most part no one yet has a clear picture of the full capabilities of quantum computers.

The application that has garnered the most attention since the mid-1990s has to do with a math problem called factoring: A quantum computer could

Quantum computers also offer modest speedups for some special-purpose problems, like searching through a database for one particular entry. This application is particularly enticing for companies wanting to sift through large amounts of information, but the time savings would not be as disruptive as in the case of factoring.
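To put a rough number on "modest": the search speedup usually cited here is Grover's algorithm, which needs on the order of the square root of the number of entries, where a classical scan needs on the order of the number itself. The Python sketch below only compares those counts. It does not run a quantum search, and the function names are illustrative.

```python
import math


def classical_expected_lookups(n_entries: int) -> float:
    """Average number of entries checked when scanning an unsorted list
    for one marked item, assuming it is equally likely to be anywhere."""
    return (n_entries + 1) / 2


def grover_iterations(n_entries: int) -> int:
    """Approximate number of Grover iterations for one marked item:
    about (pi / 4) * sqrt(N), rounded down."""
    return math.floor(math.pi / 4 * math.sqrt(n_entries))


if __name__ == "__main__":
    for n in (1_000, 1_000_000, 1_000_000_000):
        print(n, classical_expected_lookups(n), grover_iterations(n))
```

For a million entries that works out to roughly 785 quantum steps against about 500,000 classical lookups: a real saving, but a quadratic one, which is why it counts as modest.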

For many scientists, the most exciting quantum computing application is the opportunity to study quantum physics itself in a way that wasn’t possible before. Just as ordinary computers can simulate everyday physics (rocket flights, black holes and trick billiards shots, for example), quantum computers will be able to simulate quantum physics. This may not sound all that interesting, but it’s a problem that chokes today’s best supercomputers, and it’s one at which quantum computers would excel. It could help us develop new materials and better understand nature’s smallest components.
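One way to see why this chokes supercomputers: a straightforward classical simulation stores one complex number (an amplitude) for every possible combination of qubit values, and that count doubles with each added qubit. The Python sketch below assumes 16 bytes per amplitude (two 64-bit floats), which is a common choice but an assumption here, and the function name is illustrative.

```python
def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store the full state of n_qubits qubits classically.

    Assumes one complex amplitude per basis state (2**n_qubits of them)
    and 16 bytes per amplitude; both figures are illustrative assumptions.
    """
    if n_qubits < 0:
        raise ValueError("n_qubits must be non-negative")
    return (2 ** n_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (10, 30, 50):
        gib = state_vector_bytes(n) / 2 ** 30
        print(f"{n} qubits: {gib:,.6f} GiB")
```

Under these assumptions, 30 qubits need 16 GiB and 50 qubits need about 16 million GiB, which is why brute-force simulation runs out of room so quickly.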

Two key features of quantum physics are responsible for unlocking this potential power. One is superposition, which gives quantum computers a novel way of storing information. In ordinary, “classical” computers, information takes the form of binary digits (“bits”) that can have only one of two values—0 or 1—represented by different electrical charges in a transistor. But superposition means that quantum bits, or qubits, can store 0, 1, or a combination of both values simultaneously.
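In the standard textbook description, a qubit's "combination of both values" is a pair of numbers called amplitudes, one for 0 and one for 1, and the squared size of each gives the chance of seeing that value when the qubit is measured. The sketch below is a minimal Python illustration of that rule. The function name is made up for this example, and it models only the arithmetic, not real hardware.

```python
import math


def measurement_probabilities(alpha: complex, beta: complex) -> tuple[float, float]:
    """Return (P(0), P(1)) for a qubit with amplitude alpha on 0 and beta on 1.

    The amplitudes are normalized first, so any nonzero pair is accepted.
    """
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if norm == 0:
        raise ValueError("at least one amplitude must be nonzero")
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm


if __name__ == "__main__":
    # A classical-like bit: definitely 0.
    print(measurement_probabilities(1, 0))
    # An equal superposition: 0 and 1 are equally likely when measured.
    print(measurement_probabilities(1 / math.sqrt(2), 1 / math.sqrt(2)))
```

A classical bit corresponds to the first case only. The second case has no classical counterpart: the qubit holds both possibilities until it is measured.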

The other feature essential to quantum computing is entanglement, which connects two or more quantum objects (photons, electrons, atoms, etc.) in such a way that their quantum properties, including their superpositions, become entwined and inseparable regardless of how far apart they are. Entanglement allows information to spread throughout a quantum computer and

The trick to operating a quantum computer is figuring out how to maintain the entanglement and superposition of many quantum objects for long enough to do something interesting. If you can do that, then a carefully orchestrated series of operations can create just the right interactions between qubits to perform some of the tasks mentioned above.
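As a concrete and very small case of such a series of operations, the textbook recipe for entangling two qubits is a Hadamard gate on the first qubit followed by a controlled-NOT. The Python sketch below tracks the four amplitudes by hand. It is a toy simulation under that standard recipe, not a description of any particular machine, and the function names are illustrative.

```python
import math

# Amplitudes are ordered as [00, 01, 10, 11] (first qubit, then second).
State = list[complex]


def hadamard_on_first(state: State) -> State:
    """Put the first qubit into an equal superposition."""
    a00, a01, a10, a11 = state
    s = 1 / math.sqrt(2)
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def cnot_first_controls_second(state: State) -> State:
    """Flip the second qubit whenever the first qubit is 1."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def probabilities(state: State) -> list[float]:
    """Chance of each outcome 00, 01, 10, 11 when both qubits are measured."""
    return [abs(a) ** 2 for a in state]


if __name__ == "__main__":
    start = [1, 0, 0, 0]  # both qubits begin as 0
    bell = cnot_first_controls_second(hadamard_on_first(start))
    print(probabilities(bell))
```

The result gives only 00 or 11, each about half the time: neither qubit has a definite value on its own, yet the two always agree.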

This all sounds pretty promising, and it is. But to date, there has been a persistent problem: Maintaining the superposition of a single qubit—or the entanglement between many qubits—requires very good isolation from the outside world. Outside contact tends to intrude on the quantum world and destroy its delicate features. This intrusion strips qubits of their quantumness and leaves them in an ordinary